Network Connections and Protocols

To improve the structure of networks, the International Organization for Standardization (ISO) began developing a reference model for Open Systems Interconnection, the OSI reference model, in 1977. This model comprises seven well-defined layers: the transport functions are distributed over the lower four layers, and the data-processing functions over the topmost three. The data-processing layers are often bundled and referred to collectively as the application layer.

The basic idea behind this model is that each layer provides certain services for the one above. A layer thus shields the higher layers from details, such as how the corresponding services are realized. Between every two layers, there is an interface that allows defined operations and offers services.

Modern protocols are still viewed from the perspective of this tried-and-tested model, even if they have not been implemented in five or seven layers. It allows them to be compared and differentiated from other protocols.

In this book, the protocols used are divided into two basic categories derived from the OSI model. Both are crucial to terminal server operation:

- Transport protocols: Conventions for handling and converting data streams for exchange between computers over a physical network cable, a fiber optic cable, or a wireless network. These protocols correspond to the transport-oriented functions of the OSI model. Examples of transport protocols include TCP/IP, IPX/SPX, and NetBEUI.

- Communication protocols: Encoding of function calls for certain tasks to be run on a remote computer. These protocols correspond to some of the functions attributed to the application layer of the OSI model. They include interprocess communication methods and the terminal server protocols Microsoft RDP and Citrix ICA. Even though the X11 protocol is used mostly under UNIX operating systems for remote access to applications, it belongs in this category as well.

Terminal servers on a network can support several transport and communication protocols in parallel. It is thus important to know which protocols exist with the corresponding communication mechanisms and for what purpose they are used. If required, you can install missing protocols later or remove superfluous protocols anytime to adapt to a changing network environment.

The communication protocols for the terminal server listed earlier are all described in detail in this book. Let us briefly touch upon transport protocols and interprocess communication.

Transport Protocols

A transport protocol refers to the four bottom layers of the OSI model, that is, the transport functions. These functions include determining the right path to the target computer and adapting the format of the data packet for transport to another network, as well as establishing the end-to-end connection between two or more communication partners.

TCP/IP

The TCP/IP transport protocol has become a standard over the past 10 years and is therefore very important for Windows Server 2003 in general, and for a terminal server in particular. This transport protocol is most often the basis for the connection to terminal server clients via the various communication protocols.

TCP/IP consists of a set of subprotocols arranged in a layer model. The communication can either be connection-oriented (TCP) or connectionless (UDP).
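The difference between the two modes can be illustrated with a minimal Python sketch that runs entirely on the loopback interface. The function names `tcp_echo_once` and `udp_send_once` are hypothetical and chosen only for this illustration; the sketch is not how a terminal server itself communicates.

```python
import socket
import threading


def tcp_echo_once(payload: bytes) -> bytes:
    """Send payload over a TCP connection to a one-shot local echo server."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.settimeout(5)
    server.bind(("127.0.0.1", 0))       # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]

    def serve() -> None:
        conn, _ = server.accept()       # TCP: a connection is established first
        with conn:
            conn.settimeout(5)
            conn.sendall(conn.recv(1024))

    worker = threading.Thread(target=serve)
    worker.start()
    with socket.create_connection(("127.0.0.1", port), timeout=5) as client:
        client.sendall(payload)
        reply = client.recv(1024)
    worker.join()
    server.close()
    return reply


def udp_send_once(payload: bytes) -> bytes:
    """Send payload as a single UDP datagram; no connection is set up."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.settimeout(5)
    receiver.bind(("127.0.0.1", 0))
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sender.sendto(payload, receiver.getsockname())   # UDP: just address and send
    data, _ = receiver.recvfrom(1024)
    sender.close()
    receiver.close()
    return data
```

In the TCP case, the server must accept a connection before any data flows. In the UDP case, the sender simply addresses a datagram to the receiver.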

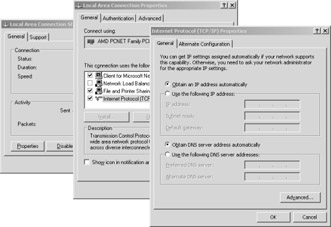

Figure 3-1: TCP/IP address configuration.

Under Windows Server 2003, you configure the TCP/IP protocol in the Control Panel using the Network Connections icon. As administrator, you can set the properties of the local area connections and the TCP/IP protocol.

NWLink and IPX/SPX

The NetWare-compatible protocol NWLink was introduced under Microsoft Windows NT 3.5. It corresponds to Novell’s IPX/SPX and can transport data over a network in a way very similar to TCP/IP. Windows Server 2003 add-on products such as Citrix MetaFrame allow the use of IPX/SPX as an alternative to TCP/IP for communication between Terminal Services and specially adapted clients.

You install the NWLink protocol on Windows Server 2003 through Start\Control Panel\Network Connections, where you select the network card to configure. In the LAN connection properties, select the Install button to open the dialog box for new network components. Then select NWLink IPX/SPX/NetBIOS Compatible Transport Protocol.

The NWLink protocol is no longer supported in the 64-bit version of Windows Server 2003. However, this does not affect Microsoft’s remote desktop protocol for connecting to terminal servers, because it cannot use NWLink as a transport medium. This is not the case for Citrix ICA, which can use various transport protocols, for example, the NWLink protocol.

NetBEUI

The former standard protocol used by Microsoft and IBM in their network operating systems was NetBEUI (NetBIOS Enhanced User Interface). NetBEUI was also supported by Windows 2000, Windows NT, Windows 98, and OS/2, but it did not allow routing on the network. However, this protocol was very easy to install and manage. Before the success of TCP/IP, NetBEUI was the preferred protocol, particularly for smaller networks. Now, under Windows Server 2003, NetBEUI is no longer used and does not exist in the system.

Note: The starting point for the development of NetBEUI was the NetBIOS interface (Network Basic Input/Output System). NetBIOS applications do not necessarily need the NetBEUI protocol and can run via TCP/IP or IPX/SPX.

Interprocess Communication

In a distributed environment, data must be exchanged bi-directionally between different server and client components. Windows Server 2003 offers nine possibilities for this process: named pipes, mail slots, Windows sockets, remote procedure calls, NetBIOS, NetDDE, server message blocks, DCOM (COM+), and SOAP.

Although some of these options are already dated, they often play an important role on a terminal server, even if the terminal server is running under Windows Server 2003. Why are they still important? Because the modern terminal server must still run several older Windows-based applications. Large companies often need to support many self-developed and critical applications for a long time. For them, it is essential that as many conventional interprocess communication methods as possible work properly. For this reason, we will now describe the most important methods (except for named pipes, mail slots, and NetDDE).

Windows Sockets

The Windows socket interface allows communication between distributed applications via protocols with different addressing systems. The types currently supported by Windows Sockets include TCP/IP and NWLink (IPX/SPX). Originally, they were derived from the Berkeley Sockets, a standard interface to develop client/server applications under UNIX and many other platforms.

Important: A terminal server communicates with its clients via Windows Sockets and not via the server service.

Remote Procedure Calls

The remote procedure call (RPC) concept, popularized by Sun Microsystems, makes it possible to invoke procedures on a remote computer as if they were executed locally. It was designed so that developers do not have to deal with the details of network communication. To that end, RPCs build on different underlying mechanisms, such as Windows Sockets, and encapsulate the actual communication in a simpler schema.
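The idea of hiding the network behind an ordinary-looking call can be sketched in a few lines of Python. This is not the Windows RPC runtime or any Microsoft API. The classes `RpcServer` and `RpcProxy` are hypothetical, JSON stands in for the real marshaling format, and the transport is a plain in-process function where a real system would use a network mechanism such as sockets.

```python
import json
from typing import Any, Callable, Dict


class RpcServer:
    """Holds the procedures that remote callers may invoke (illustrative only)."""

    def __init__(self) -> None:
        self._procedures: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, func: Callable[..., Any]) -> None:
        self._procedures[name] = func

    def handle(self, request: bytes) -> bytes:
        # Unmarshal the request, run the procedure, marshal the result.
        call = json.loads(request.decode("utf-8"))
        result = self._procedures[call["method"]](*call["params"])
        return json.dumps({"result": result}).encode("utf-8")


class RpcProxy:
    """Client-side stub: turns attribute access into a marshaled request."""

    def __init__(self, transport: Callable[[bytes], bytes]) -> None:
        self._transport = transport

    def __getattr__(self, name: str) -> Callable[..., Any]:
        def remote_call(*params: Any) -> Any:
            request = json.dumps({"method": name, "params": list(params)})
            reply = self._transport(request.encode("utf-8"))
            return json.loads(reply.decode("utf-8"))["result"]

        return remote_call
```

A caller writes `proxy.add(2, 3)` and never sees the request and reply bytes that travel between the stub and the server.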

Under Windows Server 2003, remote procedure calls and their local variant, local procedure calls, play a very important role. However, these calls usually run in the background and offer administrators an indirect means of influence only. Higher services for interprocess communication use RPCs quite often.

NetBIOS

The NetBIOS interface has been used since the beginning of the eighties to develop distributed applications. NetBIOS applications can communicate via different protocols:

- NetBIOS over TCP/IP (NetBT): Communication based on the TCP/IP protocol.

- NWLink NetBIOS (NWNBLink): Communication based on the IPX/SPX protocol.

The NetBIOS interface consists of fewer than 20 simple commands that handle data exchange. In particular, NetBIOS handles the request and response functions between client and server components. This applies to Windows Server 2003, as well. Therefore, seamless support of NetBIOS functions on a terminal server is necessary, especially for older applications because they were usually not developed using current Internet standards.

Resources for the NetBIOS interface are identified via their UNC names (Universal Naming Convention) with the format \\Computer\Share. You can now access the files, folders, and printers released under these names.
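As a small illustration of the \\Computer\Share format, the following Python sketch splits a UNC name into its parts. The function `parse_unc` and the type `UncName` are hypothetical helpers written for this example, not part of any Windows API.

```python
from typing import NamedTuple


class UncName(NamedTuple):
    computer: str
    share: str
    path: str      # optional remainder below the share, "" if absent


def parse_unc(name: str) -> UncName:
    """Split a UNC name of the form \\\\Computer\\Share[\\path] into its parts."""
    if not name.startswith("\\\\"):
        raise ValueError("a UNC name must start with two backslashes")
    parts = name[2:].split("\\", 2)
    if len(parts) < 2 or not parts[0] or not parts[1]:
        raise ValueError("a UNC name needs a computer and a share")
    path = parts[2] if len(parts) == 3 else ""
    return UncName(parts[0], parts[1], path)
```

For example, `parse_unc(r"\\srv01\docs\reports\q1.txt")` separates the computer `srv01` and the share `docs` from the path below the share.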

Important: The NetBIOS name (also known as the Microsoft computer name) does not need to be identical to the TCP/IP name (also known as the TCP/IP host name or DNS name) that might exist on the same computer. However, you are strongly urged not to use different naming conventions for the two services, because this often leads to confusion for both users and administrators. Starting with Windows 2000, the difference between the two name spaces has been virtually eliminated.

CIFS and SMB

The Common Internet File System (CIFS) gives PCs running a Windows operating system remote access to the file system of another computer. The underlying mechanism has been known as Server Message Blocks (SMB) since the early eighties. The SMB functions include session control, file access, printer control, and messages. Groups of requests to the NetBIOS interface are bundled and transmitted to a target computer.

Only when SMBs were ratified as an open X/Open standard called CIFS was the concept accepted on a wider basis. Since 1996, Microsoft has used official specifications and publications to actively promote the use of CIFS.

Unlike other file systems, CIFS provides its data to users, not computers. The user is authenticated on the server and not the client. Special authentication servers (for example, domain controllers) can be used for this across the network.

Note: On a terminal server, SMB functions are often used to connect users’ home directories and to print documents on network printers.

DCOM and COM+

The basic idea behind a distributed environment is to develop applications and services as components. By using the component principle it is possible to break down monolithic applications into predefined components based on standards that are simpler to build and maintain. If you adhere strictly to this principle, you will end up with small, independent, reusable, and self-contained units. Before the Microsoft .NET strategy was introduced, this procedure was possible through the Component Object Model (COM). COM enabled the development of binary software components and led to uniform communication between several of these components. A developer would be able to put together an application by reusing certain components according to the modular design.

So-called middleware such as COM relieves the developer of communication mechanism details, enables the use of familiar techniques, and offers a uniform application programming interface (API). Middleware therefore represents an abstraction layer between the application and the operating system, and it allows the modules of a distributed application to communicate with each other.

The COM components are anchored directly in the operating system. They are thus available for each application with defined interfaces and can be used freely. The problem, however, is that an application might no longer function or might have only limited function if the required COM components are no longer installed on the system.

COM is still widely used today. For years, it has been the basis for the historical Object Linking and Embedding (OLE) and for the ActiveX technology. It was expanded by Distributed COM (DCOM), the corresponding standard for communication across computer boundaries. Before the .NET Framework was introduced, the entire distributed-applications strategy was based on the DCOM model and its successor, COM+. It is therefore found in many established software products.

On a terminal server, the DCOM model places high demands on the system architecture it is built on. Different users communicate with their applications via DCOM, if required. All communication channels are safely allocated to their corresponding user sessions at all times on Windows Server 2003.

SOAP

The Simple Object Access Protocol (SOAP) is a protocol based on XML. SOAP exchanges data in a decentralized and distributed environment and consists of two core components: an envelope that supports extensions and modular requirements, and an encoding mechanism for representing data within the envelope. It does not require synchronous execution or direct interaction based on request/response mechanisms, and it can be carried over the different protocols normally used for communication between distributed software components.
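To make the envelope idea concrete, here is a minimal Python sketch that builds a SOAP 1.1 message with the standard library. The method name `GetSessionCount`, the namespace `urn:example:terminal`, and the helper `build_envelope` are invented for this illustration and do not belong to any real service.

```python
import xml.etree.ElementTree as ET

# Namespace of the SOAP 1.1 envelope.
SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"


def build_envelope(method: str, namespace: str, params: dict) -> str:
    """Wrap a method call and its parameters in a SOAP envelope and body."""
    ET.register_namespace("soap", SOAP_ENV)
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    call = ET.SubElement(body, f"{{{namespace}}}{method}")
    for name, value in params.items():
        # Each parameter becomes a child element of the call element.
        ET.SubElement(call, f"{{{namespace}}}{name}").text = str(value)
    return ET.tostring(envelope, encoding="unicode")


message = build_envelope("GetSessionCount", "urn:example:terminal", {"server": "ts01"})
```

The resulting string is plain XML, so it can be transported by whatever protocol the two communication partners agree on.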

SOAP is one of the basic elements of the .NET strategy and thus plays a dominant role in Windows Server 2003.

Names and Name Resolution

How do computers obtain their unique names? How do they find each other on the network? How does a user identify required resources on a network? How does a terminal server communicate with other network computers? These issues are resolved through varying concepts, all of which are based on attributing logical names to individual computers and resources.

In the course of development of TCP/IP, two techniques emerged for converting logical TCP/IP names to IP addresses:

- Hosts file: This file exists on all computers. It contains a simple line-by-line list in the form of IP address = Computer name. Because this table needs to be updated on each computer whenever anything changes (for example, when a new computer is installed), this type of name resolution is very time-consuming, labor-intensive, and error-prone.

- DNS (Domain Name System): A central name server hosts a database containing TCP/IP names and the corresponding IP addresses. Clients or specially configured servers request and receive the IP address of a certain TCP/IP name from this name server.
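The Hosts file technique is simple enough to sketch in a few lines of Python. In practice, each line of a hosts file holds an IP address followed by one or more names separated by white space, with `#` starting a comment. The function `parse_hosts` and the sample entries are hypothetical and serve only as an illustration.

```python
def parse_hosts(text: str) -> dict:
    """Build a name -> IP address table from the contents of a hosts file."""
    table = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()    # drop comments and blank lines
        if not line:
            continue
        address, *names = line.split()
        for name in names:
            # The first entry for a name wins, as in a top-to-bottom lookup.
            table.setdefault(name.lower(), address)
    return table


sample = """
# address      names
127.0.0.1      localhost
192.168.1.2    ts01 ts01.example.com   # terminal server
"""
hosts = parse_hosts(sample)
```

Every computer would need its own up-to-date copy of such a table, which is exactly the maintenance burden that a central name server removes.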

Note: There are also corresponding name resolution mechanisms for NetBIOS: the Lmhosts file (LAN manager hosts file), or WINS (Windows Internet Name Service). These mechanisms are obsolete today.

Domain Name System

Programs and users rarely reference computers and other resources by their binary network addresses. Instead, they use logical ASCII character strings, for example, www.microsoft.com. A computer on a network, however, understands only addresses such as 192.44.2.1. Therefore, we need mechanisms to convert from one convention to the other. This is particularly true for connecting clients to their terminal servers.

In the beginning, the Internet predecessor ARPANET needed only one simple ASCII file that listed all computer names and IP addresses. This approach soon became unworkable as thousands of computers became linked over the Internet. A new concept was needed that addressed, in particular, increasingly large file sizes and name conflicts when connecting new computers. This concept resulted in the Domain Name System (DNS).

The essence of DNS is the introduction of a hierarchical, domain-based name schema and a distributed database system for implementing that name schema. It is used primarily for mapping computer names to IP addresses. If configured accordingly, DNS can also map IP addresses to their logical names (reverse lookup). A globally registered computer name is called a Fully Qualified Domain Name (FQDN).
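The hierarchical name schema and the reverse lookup can both be illustrated without contacting a name server. The following Python sketch lists the domains that a name belongs to, from the top-level domain down, and derives the name under which a reverse lookup is performed. The function names are hypothetical. The `in-addr.arpa` convention for IPv4 reverse lookups is standard DNS practice rather than something described in this chapter.

```python
import ipaddress


def domain_hierarchy(fqdn: str) -> list:
    """Return the chain of domains for a name, from the top level downward."""
    labels = fqdn.rstrip(".").split(".")
    return [".".join(labels[i:]) for i in range(len(labels) - 1, -1, -1)]


def reverse_lookup_name(address: str) -> str:
    """Return the DNS name that is queried for a reverse lookup of address."""
    return ipaddress.ip_address(address).reverse_pointer
```

For `www.microsoft.com`, the hierarchy runs from `com` through `microsoft.com` to the full name, and the address 192.44.2.1 is looked up in reverse under `1.2.44.192.in-addr.arpa`.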

In this context, a domain consists of a logical structure of computers. Within a domain, there can be subdomains that are used for structuring comprehensive networks. The owner of a domain is responsible for the administration of subdomains by means of a domain name server.

If a network is very extensive, it is further divided into zones. A zone defines which Internet names are physically managed in a certain zone file. A DNS server can manage one or more zones. A zone does not necessarily contain all subdomains of a domain. Accordingly, a large domain can be divided into several zones that are managed separately. All modifications to a zone are performed on an assigned DNS server.
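How a name maps to the zone that manages it can be sketched as a longest-suffix match. This is a simplified illustration with hypothetical zone names, not the lookup algorithm of any particular DNS server.

```python
from typing import Iterable, Optional


def find_zone(name: str, zones: Iterable[str]) -> Optional[str]:
    """Return the most specific zone that contains name, or None."""
    labels = name.lower().rstrip(".").split(".")
    known = {zone.lower().rstrip(".") for zone in zones}
    # Try the longest suffix first so that a delegated subdomain zone
    # takes precedence over the zone of its parent domain.
    for start in range(len(labels)):
        candidate = ".".join(labels[start:])
        if candidate in known:
            return candidate
    return None


zones = ["example.com", "sales.example.com"]
```

With these two zones, `ts01.sales.example.com` is managed in the `sales.example.com` zone, whereas `www.example.com` stays in the `example.com` zone.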

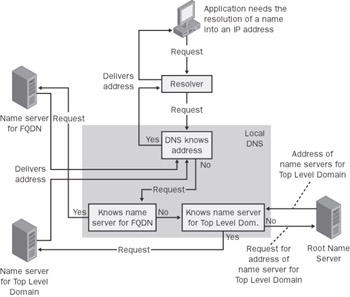

Figure 3-2: Name resolution through DNS.

Most network concepts, including Active Directory directory service under Windows Server 2003, are based on DNS. An expansion allows mapping and registering new IP addresses to logical computer names automatically at runtime. This so-called “dynamic DNS” is used mainly in combination with DHCP.

Important: Whether a terminal server functions properly or not is highly dependent on an adequate DNS infrastructure. It is often the correct configuration of the DNS server, not its platform, that makes the difference.

DHCP

Because IP addresses must be unique, an administrator cannot assign them arbitrarily. As a result, managing address assignment for terminal server clients on a corporate network can require significant personnel and time. For this reason, the Dynamic Host Configuration Protocol (DHCP) is often used in terminal server environments. It is a simple procedure that dynamically configures computers on TCP/IP networks from a remote location. Whenever a client joins the network, DHCP hands it either a preset IP address or an address from a pool.

Three values are essential for successfully sending and receiving TCP/IP data packets between two computers:

- IP address: Unique identification of the network device, for example, 192.168.1.2.

- Subnet mask: Bit pattern used to distinguish network ID and host ID within the IP address, for example, 255.255.255.0.

- Default gateway: Router that receives a data packet if the target computer is not in the same subnet as the source computer. The router forwards the data packet via routing tables to its destination.
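How these three values work together can be shown with Python's `ipaddress` module. The function `next_hop` is a hypothetical helper for this illustration: it decides whether a packet can be delivered directly or has to be handed to the gateway. The gateway address 192.168.1.1 is an assumed example value.

```python
import ipaddress


def next_hop(source_ip: str, subnet_mask: str, gateway: str, target_ip: str) -> str:
    """Return the address the sender hands the packet to."""
    # The subnet mask separates network ID and host ID of the sender's address.
    network = ipaddress.ip_network(f"{source_ip}/{subnet_mask}", strict=False)
    if ipaddress.ip_address(target_ip) in network:
        return target_ip      # same subnet: deliver directly
    return gateway            # different subnet: send via the gateway
```

A client at 192.168.1.2 with mask 255.255.255.0 reaches 192.168.1.77 directly, but sends a packet for 10.0.0.5 to its gateway.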

So how can a client that does not have these values be configured over the network? The client sends a broadcast TCP/IP packet over the network that always contains the MAC address of its network adapter. This broadcast is received by one or more DHCP servers, and each server that can provide IP configuration data replies to the client with an offer. The client then sends a second broadcast requesting the desired configuration data. This request identifies the DHCP server whose offer the client accepted, typically the one that answered first, which prevents the other servers from sending configuration data as well. The client receives its configuration data from the selected server, confirms receipt, and configures itself at runtime. The amount of data exchanged is usually less than 2 KB, and the entire communication usually takes under a second.
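The exchange described above can be simulated in a few lines of Python. This is a simplified sketch, not an implementation of the real protocol: the class `DhcpServer`, the function `configure_client`, the message dictionaries, and the server names are all hypothetical, and broadcasts are modeled as a loop over every server.

```python
from typing import Dict, List, Optional


class DhcpServer:
    """Toy DHCP server that hands out addresses from a pool."""

    def __init__(self, server_id: str, pool: List[str]) -> None:
        self.server_id = server_id
        self.free = list(pool)
        self.offers: Dict[str, str] = {}   # client MAC -> offered address
        self.leases: Dict[str, str] = {}   # client MAC -> leased address

    def on_discover(self, mac: str) -> Optional[dict]:
        if not self.free:
            return None
        address = self.free.pop(0)
        self.offers[mac] = address
        return {"type": "OFFER", "server": self.server_id, "address": address}

    def on_request(self, mac: str, chosen_server: str) -> Optional[dict]:
        address = self.offers.pop(mac, None)
        if address is None:
            return None
        if chosen_server != self.server_id:
            self.free.append(address)      # another server was chosen
            return None
        self.leases[mac] = address
        return {"type": "ACK", "server": self.server_id, "address": address}


def configure_client(mac: str, servers: List[DhcpServer]) -> Optional[dict]:
    """Run the broadcast, offer, request, and acknowledgment steps."""
    offers = [o for o in (s.on_discover(mac) for s in servers) if o]
    if not offers:
        return None
    chosen = offers[0]["server"]           # accept the first offer received
    acks = [a for a in (s.on_request(mac, chosen) for s in servers) if a]
    return acks[0] if acks else None
```

Because the request names the chosen server, every other server returns its offered address to its pool instead of sending configuration data.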

The DHCP configuration values are typically not allotted to a client permanently, but only leased for a certain amount of time. This allows IP addresses to be assigned from a pool that can be smaller than the potential number of client computers. If a client is still active on the network after half its allotted time has passed, it can request an extension from the DHCP server. If the original DHCP server is unavailable, the client attempts to contact another DHCP server after 87.5 percent of its allotted time has passed.
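The two thresholds translate into simple arithmetic. The following Python sketch computes them for a given lease duration. The function name `lease_timers` and the eight-hour lease are assumptions made for this example.

```python
def lease_timers(lease_seconds: float) -> dict:
    """Return the points in time (in seconds after assignment) that matter for a lease."""
    return {
        "renew_after": lease_seconds * 0.5,      # ask the original server for an extension
        "rebind_after": lease_seconds * 0.875,   # try any other DHCP server
        "expires_after": lease_seconds,          # the address must be given up
    }


timers = lease_timers(8 * 60 * 60)   # assumed lease of eight hours
```

For an eight-hour lease, the client asks for an extension after four hours and turns to other servers after seven hours.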

If the configuration values of an IP address have changed since they were last assigned to a client, the DHCP server can deny an extension. Usually, however, the server instructs the client to release the address and request new configuration data. In this way, configurations on the entire network can be modified without modifying the clients themselves. The administrative effort for clients is therefore minimal. All active DHCP clients on a corporate network can be reconfigured remotely within a predefined amount of time. This is especially useful for terminal server environments, because maintenance work for their clients is greatly reduced.

You can implement the dynamic DNS concept under Windows Server 2003 combined with the DHCP service. After a DHCP server maps an IP address, the data is forwarded to the DNS server, at which point it is automatically entered in the appropriate database. Because this technique eliminates the need to manually configure name resolution, it is often used with terminal server clients.

Routing

The IP schema for network addresses allows mapping an identity to each connected computer. However, to manage network routing, you need one additional identification, the network Media Access Control (MAC) address. A MAC address is globally unique and therefore unmistakable, and it is usually predefined in the hardware of each network adapter.

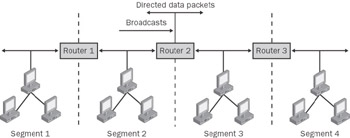

Determining the pathway from a terminal server on the network to a remote client is known as routing. It is usually realized by tables on a host or by routers as special network components. Routers can connect IP subnets, use several protocols, and forward data packets to their target subnets (for example, a workstation at home or a remote terminal server). A router handles only packets that carry a specific target address. The routing tables can be manually predefined or dynamically generated at runtime. Dynamic routers use a series of standardized protocols for different requirements.

The router components include, at a minimum, a table with IP addresses of subnets and information on how to forward the packets of these subnets. Broadcasts that might overload the network are filtered out upon transfer from one subnet (segment) to the other.

Figure 3-3: Three routers separating four network segments. The routers filter the broadcasts.

Therefore, a data packet traversing a router can be:

- Forwarded to a local network, if it belongs to the subnet

- Forwarded via another network card to another network over another router

- Rejected at the router, if the router does not have any information on how to forward this packet
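These three outcomes can be sketched as a lookup in a small routing table. The following Python example is an illustration only: the table entries and the function `route_packet` are hypothetical, and choosing the most specific matching subnet (longest prefix) is common routing practice assumed here rather than taken from the text.

```python
import ipaddress
from typing import List, Optional, Tuple

# (destination subnet, "local" or the address of the next router)
Route = Tuple[str, str]


def route_packet(target_ip: str, table: List[Route]) -> Optional[str]:
    """Return where the packet goes next, or None if it is rejected."""
    target = ipaddress.ip_address(target_ip)
    matches = [
        (ipaddress.ip_network(subnet), hop)
        for subnet, hop in table
        if target in ipaddress.ip_network(subnet)
    ]
    if not matches:
        return None                     # no forwarding information: reject
    # Prefer the most specific subnet that contains the target.
    _, hop = max(matches, key=lambda match: match[0].prefixlen)
    return hop


table = [
    ("192.168.1.0/24", "local"),        # directly attached subnet
    ("192.168.0.0/16", "10.0.0.2"),     # reachable over another router
]
```

A packet for 192.168.1.20 is delivered locally, one for 192.168.7.9 is passed to the router at 10.0.0.2, and one for 172.16.0.1 is rejected.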

Furthermore, many routers can be configured so that, in spite of their original function, they allow broadcasts on certain TCP/IP ports. This enables several broadcast-based functions to be used on a large and structured network (for example, DHCP).

Important: You should open only those TCP/IP ports on routers within intranets that are sufficiently protected by a firewall. Otherwise, the danger of illegal access from the Internet is too great.

Therefore, correct router configuration is vital, especially for terminal servers on large local networks or over long distances. The settings of an ISDN router determine the network’s capacity and the services provided for a connected workstation at home. However, communication paths over too many router components can cause timeout problems.