VoiceXML

Voice eXtensible Markup Language (VoiceXML) is an XML-based language for creating voice-enabled applications. It provides a standard way for developers to extend enterprise data and Web content to a new medium. Just as HTML describes the visual interface for a Web browser, VoiceXML describes the voice interface for a voice browser, allowing for audio input and output. VoiceXML leverages the Internet for development and delivery, making it easy for developers to add voice integration to existing systems.

The major goal of VoiceXML is to bring the advantages of Web-based development and content delivery to interactive voice response (IVR) systems. To do this, VoiceXML brings together many technologies, including speech recognition, keypad input, synthesized speech, digitized audio, and audio recordings. The result is an efficient and robust way to create user-friendly voice applications.

History of VoiceXML

Even though VoiceXML is a relatively new technology, it already has quite a history. In 1995, a group of researchers at AT&T Research were working to discover ways to use the Internet for telephony applications. The goal was to devise a system that could deliver Web content and services to ordinary phones. Over the next several years, the research continued, although in separate projects, in separate companies. AT&T, Lucent, and Motorola were all working on essentially the same thing: voice Internet.

By 1999, AT&T and Lucent each had its own version of the Phone Markup Language (PML), and Motorola had developed a technology called VoxML. At the same time, IBM was working on a similar technology called SpeechML. It quickly became clear that a joint solution was required if the voice Web market was going to succeed; consequently, these companies created an organization called the VoiceXML Forum (www.voicexml.org). Its members used the best features of each proprietary technology (with the majority of the syntax coming from Motorola's VoxML), along with some additions, to create the first version of VoiceXML, 0.9.

After VoiceXML 0.9 was published, the growing community of VoiceXML Forum members made huge improvements to the language, resulting in the release of VoiceXML 1.0 in March 2000. This release was well received, leading to several VoiceXML 1.0-compliant product offerings. With this initial success, the VoiceXML Forum submitted VoiceXML 1.0 to the W3C for consideration. With the future of the language in its hands, the W3C's Voice Browser Working Group put together version 2.0 of VoiceXML, which is now an official W3C recommendation. Even with all of the improvements to version 2.0, it is still very similar to version 1.0, making application upgrades trivial.

The VoiceXML Forum continues to flourish. Along with the founding members—AT&T, Lucent, Motorola, and IBM—there are close to 70 promoter members and almost 400 supporters of the technology! This is quite an accomplishment in just over three years. With such broad industry support, VoiceXML is destined to change the face of voice application development. It will not be long before we see a variety of consumer-oriented voice portals, as well as corporate applications, taking advantage of this flexible language.

Design Goals

The developers of VoiceXML had several goals, many centered on how VoiceXML relates to Internet architecture and development. Here are some of the top areas where VoiceXML benefits from the Internet:

- VoiceXML is an XML-based language. This allows it to take advantage of the powerful Web development tools on the market. It also allows developers to use existing skill sets to build voice-based applications.

- VoiceXML applications are easy to deploy. Unlike many of the proprietary Interactive Voice Response (IVR) systems, VoiceXML servers can be placed anywhere on the Internet, taking advantage of common Internet server-side technologies.

- The server logic and presentation logic can be cleanly separated. This allows VoiceXML applications to take advantage of existing business logic and enterprise integration. Using a common back end allows the development of different forms of presentation logic based on the requesting device.

- VoiceXML applications are platform-independent. Developers do not have to worry about making VoiceXML applications work on multiple browsers over multiple networks. The only concern is making sure the application works with the VoiceXML browser being used. This leads to quicker development and less maintenance when compared to wireless Internet and desktop Web applications.
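To make the separation of server logic and presentation logic concrete, here is a minimal sketch in Python. It is an illustration only: the function names, the sample account data, and the rule for detecting a voice browser from the User-Agent string are all assumptions made for this example, not part of VoiceXML itself.

```python
from xml.sax.saxutils import escape


def get_account_balance(account_id: str) -> float:
    """Hypothetical business logic shared by every front end."""
    balances = {"1001": 250.75, "1002": 0.0}  # stand-in for a real back end
    return balances[account_id]


def render_html(balance: float) -> str:
    """Presentation logic for a desktop Web browser."""
    text = escape(f"Your balance is {balance:.2f} dollars.")
    return f"<html><body><p>{text}</p></body></html>"


def render_voicexml(balance: float) -> str:
    """Presentation logic for a voice browser: same data, spoken not shown."""
    text = escape(f"Your balance is {balance:.2f} dollars.")
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<vxml version="2.0" xmlns="http://www.w3.org/2001/vxml">\n'
        f"  <form><block><prompt>{text}</prompt></block></form>\n"
        "</vxml>"
    )


def handle_request(account_id: str, user_agent: str) -> str:
    """Choose a renderer based on the requesting device.

    The substring test is an assumed, simplistic detection rule.
    """
    balance = get_account_balance(account_id)
    if "voice" in user_agent.lower():
        return render_voicexml(balance)
    return render_html(balance)
```

Calling `handle_request("1001", "ExampleVoiceBrowser/1.0")` would return a VoiceXML document, while the same call with a desktop browser's User-Agent would return HTML; only the presentation layer differs.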

VoiceXML Architecture

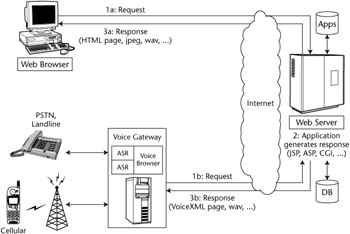

VoiceXML uses an architecture similar to that of Internet applications. The main difference is the requirement for a VoiceXML gateway. Rather than having a Web browser on the mobile device, VoiceXML applications use a voice browser on the voice gateway. The voice browser interprets VoiceXML and then sends the output to the client on a telephone, eliminating the need for any software on the client device. Being based on Internet technology makes voice application development much more approachable than previous voice systems.

Figure 15.1 shows the architecture of a VoiceXML system alongside that of an Internet application. Placing both on the same diagram highlights the similarity between the two solutions. The VoiceXML application does have some additional complexity, however: instead of relying only on the standard request/response mechanism used in Internet applications, it goes through additional steps on the voice gateway. Let's go through the steps of a sample voice interaction.

Figure 15.1: VoiceXML architecture.

Just as Internet users enter a URL to access an application, VoiceXML users dial a telephone number. Once connected, the public switched telephone network (PSTN) or cellular network communicates with the voice gateway. The gateway then forwards the request over HTTP to a Web server that can service the request (Figure 15.1-1b). On the server (Figure 15.1-2), standard server-side technologies such as JSP, ASP, or CGI can be used to generate the VoiceXML content, which is then returned to the voice gateway (Figure 15.1-3b). On the gateway, a voice browser interprets the VoiceXML code. The content is then spoken to the user over the telephone using prerecorded audio files or synthesized speech. If user input is required at any point during the application cycle, it can be entered either by speech or by keypad tones using Dual-Tone Multifrequency (DTMF) signaling. This entire process will occur many times during the use of a typical application.
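As a rough illustration of the server-side step, the following Python sketch generates a small VoiceXML 2.0 menu that a voice gateway could fetch over HTTP. The function name, prompt text, and target URLs are hypothetical; any server-side technology could produce the same markup.

```python
import xml.etree.ElementTree as ET

VXML_NS = "http://www.w3.org/2001/vxml"  # VoiceXML 2.0 namespace


def build_menu(prompt_text, choices):
    """Return a VoiceXML menu document as a string.

    choices is a list of (spoken label, target URL) pairs. Each choice
    can be selected by saying its label or by pressing its keypad digit.
    """
    if len(choices) > 9:
        raise ValueError("this sketch assigns one DTMF digit (1-9) per choice")
    vxml = ET.Element("vxml", {"version": "2.0", "xmlns": VXML_NS})
    menu = ET.SubElement(vxml, "menu")
    ET.SubElement(menu, "prompt").text = prompt_text
    for digit, (label, next_url) in enumerate(choices, start=1):
        choice = ET.SubElement(
            menu, "choice", {"dtmf": str(digit), "next": next_url}
        )
        choice.text = label
    return ET.tostring(vxml, encoding="unicode")


if __name__ == "__main__":
    print(
        build_menu(
            "Say balance or press 1. Say agent or press 2.",
            [("balance", "balance.vxml"), ("agent", "agent.vxml")],
        )
    )
```

The voice browser on the gateway would interpret the returned document, speak the prompt, and then request the `next` URL of whichever choice the caller selects, which is why the request and response cycle repeats throughout a call.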

As just stated, the main difference between Internet applications and VoiceXML applications is the use of a voice gateway. It is at this location where the voice browser resides, incorporating many important voice technologies, including Automatic Speech Recognition (ASR), telephony dialog control, DTMF, text-to-speech (TTS) and prerecorded audio playback. According to the VoiceXML 2.0 specification, a VoiceXML platform must support the following functions in order to be complete:

- Document acquisition. The voice gateway is responsible for acquiring VoiceXML documents for use within the voice browser. This can be accomplished within the context of another VoiceXML document or by external events, such as receiving a phone call. When issuing an HTTP request to a Web server, the gateway has to identify itself using the User-Agent variable in the HTTP header, providing both the browser name and the version number: "<name>/<version>".

- Audio output. Two forms of audio output must be supported: text-to-speech (TTS) and prerecorded audio files. Text-to-speech has to be generated on the fly, based on the VoiceXML content. The resulting synthesized speech often sounds robotic, making it difficult to comprehend. This is where prerecorded audio files come into play. Application developers can prerecord the application output to make the voice sound more natural. The audio files can reside on a Web server and be referred to by a uniform resource identifier (URI).

- Audio input. The voice gateway has to be able to recognize both character and spoken input. The most common form of character input is DTMF. This input type is best suited for entering passwords, such as PINs, or responses to choice menus. Unfortunately, DTMF input is quite limited. It does not work well for entering data that is not numeric, leading to the requirement for speech recognition.

- Transfer. The voice gateway has to be capable of making a connection to a third party. This most commonly happens through a communications network, such as the telephone network.
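Two of the functions above, document acquisition and audio output, can be sketched briefly in Python. This is an illustration under stated assumptions: the browser name, version, and URLs are invented, and the request is only constructed, never sent. The audio sketch relies on the VoiceXML convention that the content of an `<audio>` element is rendered as a fallback when the audio file cannot be played.

```python
import xml.etree.ElementTree as ET
from urllib.request import Request


def build_document_request(url, browser_name, browser_version):
    """Build (but do not send) the HTTP request a gateway might issue.

    The User-Agent value follows the "<name>/<version>" form.
    """
    user_agent = f"{browser_name}/{browser_version}"
    return Request(url, headers={"User-Agent": user_agent})


def build_audio_prompt(audio_uri, fallback_text):
    """Return a prompt that plays a recording referenced by URI.

    The element text is alternate content for text-to-speech.
    """
    prompt = ET.Element("prompt")
    audio = ET.SubElement(prompt, "audio", {"src": audio_uri})
    audio.text = fallback_text
    return ET.tostring(prompt, encoding="unicode")


if __name__ == "__main__":
    request = build_document_request(
        "http://example.com/app/start.vxml", "ExampleVoiceBrowser", "1.0"
    )
    print(request.full_url, request.header_items())
    print(
        build_audio_prompt(
            "http://example.com/audio/welcome.wav", "Welcome to the service."
        )
    )
```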

When it comes to speech recognition, the Automatic Speech Recognition (ASR) engine has to be capable of recognizing input dynamically. A user will often speak commands into the telephone, which have to be recognized and acted upon. The set of suitable inputs is called a grammar. This set of data can either be incorporated directly into the VoiceXML document or stored at an external location and referenced by a URI. It is important to provide "tight" grammars so the speech recognition engine can provide accurate speech recognition in noisy environments, such as over a cell phone.
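The two ways of supplying a grammar can be sketched as follows. This Python example builds a VoiceXML `<field>` whose grammar is either written inline as a short list of accepted phrases or referenced by URI. The helper name, field name, phrases, and grammar URL are hypothetical, and the inline grammar uses the XML form of the W3C Speech Recognition Grammar Specification as an assumed format.

```python
import xml.etree.ElementTree as ET


def build_field(name, prompt_text, phrases=None, grammar_uri=None):
    """Return a <field> element with an inline or an external grammar.

    Exactly one of phrases (inline) or grammar_uri (external) is required.
    """
    if (phrases is None) == (grammar_uri is None):
        raise ValueError("give exactly one of phrases or grammar_uri")
    field = ET.Element("field", {"name": name})
    ET.SubElement(field, "prompt").text = prompt_text
    if grammar_uri is not None:
        # External grammar: the voice browser fetches it from the URI.
        ET.SubElement(
            field, "grammar",
            {"src": grammar_uri, "type": "application/srgs+xml"},
        )
    else:
        # Inline grammar: a "tight" list of the only phrases accepted.
        grammar = ET.SubElement(
            field, "grammar",
            {"version": "1.0", "root": name, "mode": "voice"},
        )
        rule = ET.SubElement(grammar, "rule", {"id": name})
        one_of = ET.SubElement(rule, "one-of")
        for phrase in phrases:
            ET.SubElement(one_of, "item").text = phrase
    return field


if __name__ == "__main__":
    inline = build_field("city", "Which city?", phrases=["Boston", "Chicago"])
    print(ET.tostring(inline, encoding="unicode"))
```

Keeping the phrase list short is what makes the grammar tight: the recognizer only has to distinguish among a handful of candidates, which helps on a noisy line.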

In addition to speech recognition, the gateway also has to be able to record audio input. This capability is useful for applications that require open dictation, such as notes associated with a completed work order.

Note: Speech recognition is not the same as voice recognition. Speech recognition will work for nearly any voice, and does not have to be trained for individual users. It picks up speech patterns, rather than voice inflections. Voice recognition is more commonly used as a form of authentication to identify individual users.