Setting Up an NFS File Server in Red Hat Linux

Instead of representing storage devices as drive letters (A, B, C, and so on), as they are in Microsoft operating systems, Red Hat Linux connects file systems from multiple hard disks, floppy disks, CD-ROMs, and other local devices invisibly to form a single Linux file system. The Network File System (NFS) facility lets you extend your Red Hat Linux file system in the same way, to connect file systems on other computers to your local directory structure as well.

Cross-Reference: See Chapter 10 for a description of how to mount local devices on your Linux file system. The same command (mount) is used to mount both local devices and NFS file systems.

Creating an NFS file server is an easy way to share large amounts of data among the users and computers in an organization. An administrator of a Red Hat Linux system that is configured to share its file systems using NFS has several things to do to get NFS working:

- Set up the network: If a LAN or other network connection is already connecting the computers on which you want to use NFS (using TCP/IP as the network transport), you already have the network you need.

- On the server, choose what to share: Decide which file systems on your Linux NFS server to make available to other computers. You can choose any point in the file system to make all files and directories below that point accessible to other computers.

- On the server, set up security: You can use several different security features to suit the level of security with which you are comfortable. Mount-level security lets you restrict the computers that can mount a resource and, for those allowed to mount it, lets you specify whether it can be mounted read/write or read-only. With user-level security, you map users from the client systems to users on the NFS server. In this way, users can rely on standard Linux read/write/execute permissions, file ownership, and group permissions to access and protect files.

- On the client, mount the file system: Each client computer that is allowed access to the server's NFS shared file system can mount it anywhere the client chooses. For example, you may mount a file system from a computer called maple on the /mnt/maple directory in your local file system. After it is mounted, you can view the contents of that directory by typing ls /mnt/maple. Then you can use the cd command below the /mnt/maple mount point to see the files and directories it contains.

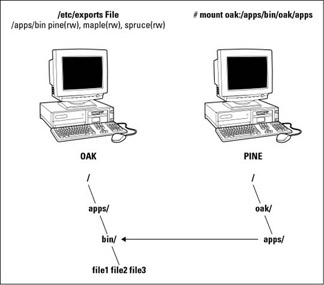

Figure 18-1 illustrates a Linux file server using NFS to share (export) a file system and a client computer mounting the file system to make it available to its local users.

Figure 18-1: NFS can make selected file systems available to other computers.

In this example, a computer named oak makes its /apps/bin directory available to clients on the network (pine, maple, and spruce) by adding an entry to the /etc/exports file. The client computer (pine) sees that the resource is available, then mounts the resource on its local file system at the mount point /oak/apps. At this point, any files, directories, or subdirectories from /apps/bin on oak are available to users on pine (given proper permissions).

Although it is often used as a file server (or other type of server), Red Hat Linux is a general-purpose operating system. So, any Red Hat Linux system can share file systems (export) as a server or use other computers' file systems (mount) as a client. Contrast this with dedicated file servers, such as NetWare, which can only share files with client computers (such as Windows workstations) and will never act as a client.

Many people use the term file system rather loosely. A file system is usually a structure of files and directories that exists on a single device (such as a hard disk partition or CD-ROM). When I talk about the Linux file system, however, I am referring to the entire directory structure (which may include file systems from several disks or NFS resources), beginning from root (/) on a single computer. A shared directory in NFS may represent all or part of a computer's file system, which can be attached (from the shared directory down the directory tree) to another computer's file system.

Sharing NFS file systems

To share an NFS file system from your Red Hat Linux system, you need to export it. Exporting is done in Red Hat Linux by adding entries into the /etc/exports file. Each entry identifies the directory in your local file system that you want to share. The entry identifies the other computers that can share the resource (or opens it to all computers) and includes other options that reflect permissions associated with the directory.

Remember that when you share a directory, you are sharing all files and subdirectories below that directory as well (by default). So, you need to be sure that you want to share everything in that directory structure. There are still ways to restrict access within that directory structure (those methods are described later).

Red Hat provides a graphical tool for configuring NFS called the NFS Server Configuration window (redhat-config-nfs command). The following sections describe how to use the NFS Server Configuration window to share directories with other computers, and then describe the underlying configuration files that are changed to make that happen.

Using the NFS Server Configuration window

The NFS Server Configuration window (redhat-config-nfs command) allows you to share your NFS directories using a graphical interface. Start this window from the main Red Hat menu by clicking System Settings → Server Settings → NFS.

To share a directory with the NFS Server Configuration window, do the following:

- From the NFS Server Configuration window, click File → Add Share. The Add NFS Share window appears, as shown in Figure 18-2.

Figure 18-2: Identify a directory to share and access permissions with the NFS Server Configuration window.

- In the Add NFS Share window Basic tab, type the following information:

  - Directory: Type the name of the directory you want to share. (The directory must exist before you can add it.)

  - Host(s): Enter one or more host names to indicate which hosts can access the shared directory. Host names, domain names, and IP addresses are allowed here. Separate each name with a space. (See the "Host names in /etc/exports" section later in this chapter for valid host names.) Add an asterisk (*) to place no restrictions on which hosts can access this directory.

    Note: Although I've just described how it should work, adding multiple host names does not work properly at the moment. If you are adding more than a single host, domain name, or IP address, you may need to either create a separate share for each one, or edit the /etc/exports file by hand, as described in the following sections.

  - Basic permissions: Click Read-only or Read/Write to let remote computers mount the shared directory with read access only or read/write access, respectively.

- Click the General Options tab. This tab lets you add options that define how the shared directory behaves when a remote host connects to it (mounts it):

  - Allow connections from ports 1024 and higher: Normally, an NFS client will request the NFS service from a port number under 1024. Select this option if you need to allow a client to connect to you from a higher port number. (This sets the insecure option.) To allow a Mac OS X computer to mount a shared NFS directory, you must have the insecure option set for the shared directory.

  - Allow insecure file locking: If checked, NFS will not authenticate any locking requests from remote users of this shared directory. Older NFS clients may not deliver their credentials when they ask for a file lock. (This sets the insecure_locks option.)

  - Disable subtree checking: By selecting this option, NFS won't verify that the requested file is actually in the shared directory (only that it's in the correct file system). You can disable subtree checking if an entire file system is being shared. (This sets the no_subtree_check option.)

  - Sync write operations on request: This is on by default, which forces a write operation from a remote client to be synced on your local disk when the client requests it. (This sets the sync option.)

  - Force sync of write operations immediately: If keeping the shared data immediately up-to-date is critical, select this option to force the immediate synchronization of writes to your hard disk. (This sets the no_wdelay option.)

- Click the User Access tab, then select any of the following options:

  - Treat the remote root user as local root: If this option is on, the root user on a remote host that accesses your shared directory can save and modify files as though he or she were the local root user. Having this on is a security risk, since the remote user can potentially modify critical files. (This sets the no_root_squash option.)

  - Treat all client users as anonymous users: When this option is on, you can indicate that particular user and group IDs be assigned to every user accessing the shared directory from a remote computer. Enter the user ID and group ID you want assigned to all remote users. (This sets the anonuid and anongid options to the numbers you choose.)

- Click OK. The new shared directory appears in the NFS Server Configuration window.

At this point, the configuration file (/etc/exports) should have the shared directory entry created in it. To turn on the NFS service and make the shared directory available, type the following from a Terminal window as root user:

- To immediately turn on NFS, type:

    # service nfs start

- To permanently turn on the NFS service, type:

    # chkconfig nfs on

- If you have a firewall configured, you must ensure that UDP ports 111 and 2049 are accepting requests. (See Chapter 14 for information on configuring your iptables or ipchains firewall.)

The next few sections describe the /etc/exports file you just created. At this point, a client can use your shared directory only after mounting it on its local file system. Refer to the "Using NFS file systems" section later in this chapter.

Configuring the /etc/exports file

The shared directory information you entered into the NFS Server Configuration window is added to the /etc/exports file. As root user, you can use any text editor to configure the /etc/exports file to modify shared directory entries or add new ones. Here is an example of an /etc/exports file, including some entries that it could include:

    /cal    *.linuxtoys.net(rw)               # Company events
    /pub    (ro,insecure,all_squash)          # Public dir
    /home   maple(rw,squash_uids=0-99) spruce(rw,squash_uids=0-99)

The following text describes those entries:

- /cal: Represents a directory that contains information about events related to the company. It is made accessible to everyone with accounts on any computers in the company's domain (*.linuxtoys.net). Users can write files to the directory as well as read them (indicated by the rw option). The comment (# Company events) simply serves to remind you what the directory contains.

- /pub: Represents a public directory. It allows any computer and user to read files from the directory (indicated by the ro option), but not to write files. The insecure option enables any computer, even one that doesn't use a secure NFS port, to access the directory. The all_squash option causes all users (UIDs) and groups (GIDs) to be mapped to the nfsnobody user, giving them minimal permission to files and directories.

- /home: This entry enables a set of users to have the same /home directory on different computers. Say, for example, that you are sharing /home from a computer named oak. The computers named maple and spruce could each mount that directory on their own /home directory. If you gave all users the same user name/UIDs on all machines, you could have the same /home/user directory available for each user, regardless of which computer they logged into. The squash_uids=0-99 option is used to prevent any administrative login from another computer from changing any files in the shared directory.

Of course, you can share any directories that you choose (these were just examples), including the entire file system (/). There are security implications of sharing the whole file system or sensitive parts of it (such as /etc). Security options that you can add to your /etc/exports file are described throughout the sections that follow.

The format of the /etc/exports file is:

Directory Host(Options) # Comments

Directory is the name of the directory that you want to share. Host indicates the host computer that the sharing of this directory is restricted to. Options can include a variety of options to define the security measures attached to the shared directory for the host. (You can repeat Host/Option pairs.) Comments are any optional comments you want to add (following the # sign).

Host names in /etc/exports

You can indicate in the /etc/exports file which host computers can have access to your shared directory. Be sure to have a space between each host name. Here are ways to identify hosts:

- Individual host: You can enter one or more TCP/IP host names or IP addresses. If the host is in your local domain, you can simply indicate the host name. Otherwise, you can use the full host.domain format. These are valid ways of indicating individual host computers:

    maple
    maple.handsonhistory.com
    10.0.0.11

- IP network: To allow access to all hosts from a particular network address, indicate a network number and its netmask, separated by a slash (/). These are valid ways of indicating network numbers:

    10.0.0.0/255.0.0.0
    172.16.0.0/255.255.0.0
    192.168.18.0/255.255.255.0

- TCP/IP domain: Using wildcards, you can include all or some host computers from a particular domain level. Here are some valid uses of the asterisk and question mark wildcards:

    *.handsonhistory.com
    *craft.handsonhistory.com
    ???.handsonhistory.com

  The first example matches all hosts in the handsonhistory.com domain. The second example matches woodcraft, basketcraft, or any other host names ending in craft in the handsonhistory.com domain. The final example matches any three-letter host names in the domain.

  Note: Using an asterisk doesn't match subdomains. For example, *.handsonhistory.com would not cause the host name mallard.duck.handsonhistory.com to be included in the access list. Also, separate multiple host names with spaces, but if you add options after each host name, leave no spaces between the host name and the parentheses. For example:

    *.handsonhistory.com(rw) *.example.net(ro)

- NIS groups: You can allow access to hosts contained in an NIS group. To indicate an NIS group, precede the group name with an at (@) sign (for example, @group).
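A stray space between a host name and its option list is easy to miss, and on a Linux NFS server it generally means the options apply to all hosts rather than to the host you intended. The following is a minimal sketch of a pre-export check, not part of the NFS tools. It writes a hypothetical sample file (standing in for /etc/exports) and uses awk to report lines where a parenthesized option list stands alone after a host name. The file contents and the variable names are assumptions made for illustration.

```shell
# Hypothetical sample standing in for /etc/exports.
exports_file=$(mktemp)
cat > "$exports_file" <<'EOF'
/cal   *.linuxtoys.net(rw)   # options attached to the host: intended form
/pub   (ro,all_squash)       # no host named: options apply to all hosts
/home  maple (rw)            # space after maple: probably a mistake
EOF

# Strip comments, then report lines where an option list stands alone
# in the third field or later (that is, after a host name).
suspect_lines=$(awk '{ sub(/#.*/, "") }
    { for (i = 3; i <= NF; i++) if ($i ~ /^\(/) { print NR; break } }' "$exports_file")

echo "Lines to double-check: $suspect_lines"
rm -f "$exports_file"
```

The check is only a heuristic: a line such as the /pub entry, where the option list directly follows the directory, is left alone because omitting the host is a legitimate way to open a directory to everyone.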

Access options in /etc/exports

You don't have to just give away your files and directories when you export a directory with NFS. In the options part of each entry in /etc/exports, you can add options that allow or limit access by setting read/write permission. These options, which are passed to NFS, are as follows:

- ro: Allow the client to mount this exported file system read-only. (A client mounts a file system read/write by default, but a directory is exported read-only unless rw is given.)

- rw: Explicitly ask that a shared directory be shared with read/write permissions. (If the client chooses, it can still mount the directory read-only.)

User mapping options in /etc/exports

Besides options that define how permissions are handled generally, you can also use options to set the permissions that specific users have to NFS shared file systems.

One method that simplifies this process is to have each user with multiple user accounts have the same user name and UID on each machine. This makes it easier to map users so that they have the same permission on a mounted file system as they do on files stored on their local hard disk. If that method is not convenient, user IDs can be mapped in many other ways. Here are some methods of setting user permissions and the /etc/exports option that you use for each method:

- root user: Normally, the client's root user is mapped into the nfsnobody user name (UID 65534). This prevents the root user from a client computer from being able to change all files and directories in the shared file system. If you want the client's root user to have root permission on the server, use the no_root_squash option.

  Tip: There may be other administrative users, in addition to root, that you want to squash. To squash UIDs 0 through 99, for example, use squash_uids=0-99.

- nfsnobody user/group: By using the nfsnobody user name and group name, you essentially create a user/group whose permissions will not allow access to files that belong to any real users on the server (unless those users open permission to everyone). However, files created by the nfsnobody user or group will be available to anyone assigned as the nfsnobody user or group. To set all remote users to the nfsnobody user/group, use the all_squash option.

  The nfsnobody user and group are assigned UID and GID 65534. This prevents the ID from running into a valid user or group ID. Using the anonuid or anongid options, you can change the nfsnobody user or group, respectively. For example, anonuid=175 sets all anonymous users to UID 175 and anongid=300 sets the GID to 300. (Only the number is displayed when you list file permissions, however, unless you add entries with names to /etc/passwd and /etc/group for the new UIDs and GIDs.)

- User mapping: If the same users have login accounts for a set of computers (and they have the same IDs), NFS, by default, will map those IDs. This means that if the user named mike (UID 110) on maple has an account on pine (mike, UID 110), from either computer he could use his own remotely mounted files from the other computer.

  If a client user that is not set up on the server creates a file on the mounted NFS directory, the file is assigned to the remote client's UID and GID. (An ls -l on the server would show the UID of the owner.) You can identify a file that contains user mappings using the map_static option.

  Tip: The exports man page describes the map_static option, which should let you create a file that contains new ID mappings. These mappings should let you remap client IDs into different IDs on the server.
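Because default mapping relies on each user having the same UID on every machine, it can help to compare account files before sharing a directory. Here is a minimal sketch, assuming you have copied the relevant lines of /etc/passwd from the server and from a client into two files. The sample contents below (including the user sara and the mismatched UID) are hypothetical and exist only to show the comparison.

```shell
# Hypothetical excerpts of /etc/passwd from a server and a client.
server_passwd=$(mktemp)
client_passwd=$(mktemp)
cat > "$server_passwd" <<'EOF'
mike:x:110:110::/home/mike:/bin/bash
sara:x:111:111::/home/sara:/bin/bash
EOF
cat > "$client_passwd" <<'EOF'
mike:x:110:110::/home/mike:/bin/bash
sara:x:205:205::/home/sara:/bin/bash
EOF

# First pass (server file) records each user's UID; second pass (client
# file) prints users whose UID differs between the two machines.
mismatches=$(awk -F: 'NR == FNR { uid[$1] = $3; next }
    ($1 in uid) && uid[$1] != $3 { print $1 ": server UID " uid[$1] ", client UID " $3 }' \
    "$server_passwd" "$client_passwd")

echo "$mismatches"
rm -f "$server_passwd" "$client_passwd"
```

Any user this reports would see files on the NFS mount as belonging to someone else, so either align the UIDs or use one of the squash options described above.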

Exporting the shared file systems

After you have added entries to your /etc/exports file, you can actually export the directories listed using the exportfs command. If you reboot your computer or restart the NFS service, the exportfs command is run automatically to export your directories. However, if you want to export them immediately, you can do so by running exportfs from the command line (as root).

Tip: It's a good idea to run the exportfs command after you change the exports file. If any errors are in the file, exportfs will identify those errors for you.

Here's an example of the exportfs command:

    # /usr/sbin/exportfs -a -v
    exporting maple:/pub
    exporting spruce:/pub
    exporting maple:/home
    exporting spruce:/home
    exporting *:/mnt/win

The -a option indicates that all directories listed in /etc/exports should be exported. The -v option says to print verbose output. In this example, the /pub and /home directories from the local server are immediately available for mounting by those client computers that are named (maple and spruce). The /mnt/win directory is available to all client computers.

Running the exportfs command temporarily makes your exported NFS directories available. To have your NFS directories available on an ongoing basis (that is, every time your system reboots), you need to set your nfs start-up scripts to run at boot time. This is described in the next section.
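If you edit /etc/exports while the NFS service is already running, the exportfs man page also documents an -r option that re-exports everything and drops entries you have removed from the file. The sketch below wraps that in a small shell function. The function name reexport_and_list is hypothetical, and the function is only defined here, not called, because it must be run as root on the NFS server.

```shell
# Re-read /etc/exports and show what is currently exported.
# Must be run as root on the NFS server; defined here but not called.
reexport_and_list() {
    # -r: re-export all directories, dropping entries removed from /etc/exports
    /usr/sbin/exportfs -r -v || return 1
    # With no other arguments, -v lists the current exports and their options
    /usr/sbin/exportfs -v
}
```

To use it, type reexport_and_list at a root shell prompt after saving your changes to /etc/exports.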

Starting the nfs daemons

For security purposes, the NFS service is turned off by default on your Red Hat Linux system. You can use the chkconfig command to turn on the NFS service so that your files are exported and the nfsd daemons are running when your system boots.

There are two start-up scripts you want to turn on for the NFS service to work properly. The nfs service exports file systems (from /etc/exports) and starts the nfsd daemon that listens for service requests. The nfslock service starts the lockd daemon, which helps allow file locking to prevent multiple simultaneous use of critical files over the network.

You can use the chkconfig command to turn on both the nfs and nfslock services by typing the following commands (as root user):

    # chkconfig nfs on
    # chkconfig nfslock on

The next time you start your computer, the NFS service will start automatically and your exported directories will be available. If you want to start the service immediately, without waiting for a reboot, you can type the following:

    # /etc/init.d/nfs start
    # /etc/init.d/nfslock start

The NFS service should now be running and ready to share directories with other computers on your network.

Using NFS file systems

After a server exports a directory over the network using NFS, a client computer connects that directory to its own file system using the mount command. The mount command is the same one used to mount file systems from local hard disks, CDs, and floppies. Only the options to give to mount are slightly different.

Mount can automatically mount NFS directories that are added to the /etc/fstab file, just as it does with local disks. NFS directories can also be added to the /etc/fstab file in such a way that they are not automatically mounted. With a noauto option, an NFS directory listed in /etc/fstab is inactive until the mount command is used, after the system is up and running, to mount the file system.

Manually mounting an NFS file system

If you know that the directory from a computer on your network has been exported (that is, made available for mounting), you can mount that directory manually using the mount command. This is a good way to make sure that it is available and working before you set it up to mount permanently. Here is an example of mounting the /tmp directory from a computer named maple on your local computer:

    # mkdir /mnt/maple
    # mount maple:/tmp /mnt/maple

The first command (mkdir) creates the mount point directory (/mnt is a common place to put temporarily mounted disks and NFS file systems). The mount command then identifies the remote computer and shared file system separated by a colon (maple:/tmp). Then, the local mount point directory follows (/mnt/maple).

Note: If the mount failed, make sure the NFS service is running on the server and that the server's firewall rules don't deny access to the service. From the server, type ps ax | grep nfsd. You should see a list of nfsd server processes. If you don't, try to start your NFS daemons as described in the previous section. To view your firewall rules, type ipchains -L or iptables -L depending on which firewall service you are using (see Chapter 14 for a description of firewalls). By default, the nfsd daemon listens for NFS requests on port number 2049. Your firewall must accept UDP requests on ports 2049 (nfs) and 111 (rpc).
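You can also check the server from the client side before digging into firewall rules. The following is a minimal sketch, assuming the rpcinfo and showmount commands are installed on the client. The function name check_nfs_server is hypothetical, and the function is defined but not called here because it needs a reachable NFS server.

```shell
# Ask an NFS server what it offers, from the client side.
# Defined here but not called; usage: check_nfs_server maple
check_nfs_server() {
    nfs_server=$1
    if [ -z "$nfs_server" ]; then
        echo "usage: check_nfs_server HOST" >&2
        return 2
    fi
    # List RPC services registered with the server's portmapper (port 111);
    # entries for nfs and mountd should appear if the service is up.
    rpcinfo -p "$nfs_server" || return 1
    # List the directories the server exports and which hosts may mount them.
    showmount -e "$nfs_server"
}
```

If rpcinfo gets no answer at all, suspect the network or the firewall on port 111. If it answers but lists no nfs entry, the NFS service is probably not running on the server.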

To ensure that the mount occurred, type mount. This command lists all mounted disks and NFS file systems. Here is an example of the mount command and its output:

    # mount
    /dev/hda3 on / type ext3 (rw)
    none on /proc type proc (rw)
    none on /dev/pts type devpts (rw,gid=5,mode=620)
    usbdevfs on /proc/bus/usb type usbdevfs (rw)
    maple:/tmp on /mnt/maple type nfs (rw,addr=10.0.0.11)

The output from the mount command shows your mounted disks and NFS file systems. The first output line shows your hard disk (/dev/hda3), mounted on the root file system (/), with read/write permission (rw), with a file system type of ext3 (the standard Linux file system type). The /proc, /dev/pts, and /proc/bus/usb mount points represent special file system types. The just-mounted NFS file system is the /tmp directory from maple (maple:/tmp). It is mounted on /mnt/maple and its mount type is nfs. The file system was mounted read/write (rw) and the IP address of maple is 10.0.0.11 (addr=10.0.0.11).

What I just showed is a simple case of using mount with NFS. The mount is temporary and is not remounted when you reboot your computer. You can also add options to the mount command line for NFS mounts:

- -a: Mount all file systems in /etc/fstab (except those indicated as noauto).

- -f: This goes through the motions of (fakes) mounting the file systems on the command line (or in /etc/fstab). Used with the -v option, -f is useful for seeing what mount would do before it actually does it.

- -r: Mounts the file system as read-only.

- -w: Mounts the file system as read/write. (For this to work, the shared file system must have been exported with read/write permission.)

The next section describes how to make the mount more permanent (using the /etc/fstab file) and how to select various options for NFS mounts.

Automatically mounting an NFS file system

To set up an NFS file system to mount automatically each time you start your Red Hat Linux system, you need to add an entry for that NFS file system to the /etc/fstab file. The /etc/fstab file contains information about all different kinds of mounted (and available to be mounted) file systems for your Red Hat Linux system.

The format for adding an NFS file system to your local system is the following:

    host:directory    mountpoint    nfs    options    0 0

The first item (host:directory) identifies the NFS server computer and shared directory. mountpoint is the local mount point on which the NFS directory is mounted, followed by the file system type (nfs). Any options related to the mount appear next in a comma-separated list. (The last two zeros just tell Red Hat Linux not to dump the contents of the file system and not to run fsck on the file system.)

The following are two examples of NFS entries in /etc/fstab:

    maple:/tmp    /mnt/maple    nfs    rsize=8192,wsize=8192    0 0
    oak:/apps     /oak/apps     nfs    noauto,ro                0 0

In the first example, the remote directory /tmp from the computer named maple (maple:/tmp) is mounted on the local directory /mnt/maple (the local directory must already exist). The file system type is nfs, and read (rsize) and write (wsize) buffer sizes are set at 8192 to speed data transfer associated with this connection. In the second example, the remote directory is /apps on the computer named oak. It is set up as an NFS file system (nfs) that can be mounted on the /oak/apps directory locally. This file system is not mounted automatically (noauto), however, and can be mounted only as read only (ro) using the mount command after the system is already running.
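To see at a glance which NFS entries a file in /etc/fstab format contains, you can pick them out by their third field. This is a minimal sketch that uses a temporary sample file holding the two entries above plus one local disk entry. On a real system you would point awk at /etc/fstab instead. The variable names are assumptions made for illustration.

```shell
# Sample file in /etc/fstab format (hypothetical stand-in for the real file).
fstab_file=$(mktemp)
cat > "$fstab_file" <<'EOF'
# device      mountpoint   type  options                  dump fsck
/dev/hda3     /            ext3  defaults                 1 1
maple:/tmp    /mnt/maple   nfs   rsize=8192,wsize=8192    0 0
oak:/apps     /oak/apps    nfs   noauto,ro                0 0
EOF

# Skip comment lines, keep entries whose file system type (field 3) is nfs,
# and print the remote resource, the local mount point, and the options.
nfs_entries=$(awk '$1 !~ /^#/ && $3 == "nfs" { print $1 " -> " $2 " (" $4 ")" }' "$fstab_file")

echo "$nfs_entries"
rm -f "$fstab_file"
```

Each output line pairs a remote host:directory with its local mount point, which makes it easy to spot entries marked noauto or ro.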

Tip: The default is to mount an NFS file system as read/write. However, the default for exporting a file system is read-only. If you are unable to write to an NFS file system, check that it was exported as read/write from the server.

Mounting noauto file systems

In your /etc/fstab file are devices for other file systems that are not mounted automatically (probably /dev/cdrom and /dev/fd0, for your CD-ROM and floppy disk devices, respectively). A noauto file system can be mounted manually. The advantage is that when you type the mount command, you can type less information and have the rest filled in by the contents of the /etc/fstab file. So, for example, you could type:

# mount /oak/apps

With this command, mount knows to check the /etc/fstab file to get the file system to mount (oak:/apps), the file system type (nfs), and the options to use with the mount (in this case ro for read-only). Instead of typing the local mount point (/oak/apps), you could have typed the remote file system name (oak:/apps) and had the other information filled in.

Tip: When naming mount points, including the name of the remote NFS server in that name can help you remember where the files are actually being stored. This may not be possible if you are sharing home directories (/home) or mail directories (/var/spool/mail).

Using mount options

You can add several mount options to the /etc/fstab file (or to a mount command line itself) to impact how the file system is mounted. When you add options to /etc/fstab, they must be separated by commas. The following are some options that are valuable for mounting NFS file systems:

- hard: With this option on, if the NFS server disconnects or goes down while a process is waiting to access it, the process will hang until the server comes back up. This option is helpful if it is critical that the data you are working with not get out of sync with the programs that are accessing it. (This is the default behavior.)

- soft: With this option on, if the NFS server disconnects or goes down, a process trying to access data from the server will time out after a set period of time.

- rsize: The number of bytes of data read at a time from an NFS server. The default is 1024. Using a larger number (such as 8192) will get you better performance on a network that is fast (such as a LAN) and is relatively error-free (that is, one that doesn't have a lot of noise or collisions).

- wsize: The number of bytes of data written at a time to an NFS server. The default is 1024. Performance issues are the same as with the rsize option.

- timeo=#: Sets how long to wait, after an RPC timeout occurs, before a second transmission is made, where # represents a number in tenths of a second. The default value is seven-tenths of a second. Each successive timeout causes the timeout value to be doubled (up to 60 seconds maximum). You should increase this value if you believe that timeouts are occurring because of slow response from the server or a slow network.

- retrans=#: Sets the number of minor retransmission timeouts that occur before a major timeout. When a major timeout occurs, the process is either aborted (soft mount) or a Server Not Responding message appears on your console.

- retry=#: Sets how many minutes to continue to retry failed mount requests, where # is replaced by the number of minutes to retry. The default is 10,000 minutes (which is about one week).

- bg: If the first mount attempt times out, try all subsequent mounts in the background. This option is very valuable if you are mounting a slow or sporadically available NFS file system. By placing mount requests in the background, Red Hat Linux can continue to mount other file systems instead of waiting for the current one to complete.

  Note: If a nested mount point is missing, a timeout occurs to allow for the needed mount point to be added. For example, if you mount /usr/trip and /usr/trip/extra as NFS file systems, and /usr/trip is not yet mounted when /usr/trip/extra tries to mount, /usr/trip/extra will time out. Hopefully, /usr/trip will come up and /usr/trip/extra will mount on the next retry.

- fg: If the first mount attempt times out, try subsequent mounts in the foreground. This is the default behavior. Use this option if it is imperative that the mount be successful before continuing (for example, if you were mounting /usr).

Any option that doesn't take a value can have no prepended to it to have the opposite effect. For example, nobg indicates that the mount should not be done in the background.
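The doubling behavior described for the timeo option is easier to picture with the numbers written out. The sketch below is plain shell arithmetic and does not query NFS in any way. It assumes the default starting value of seven-tenths of a second and the 60-second cap given above, and works in tenths of a second so that only integer math is needed.

```shell
timeo=7      # initial timeout in tenths of a second (the default given above)
max=600      # 60-second cap, expressed in tenths of a second
schedule=""
t=$timeo
while :; do
    # Append the current timeout, converted to seconds with one decimal place.
    schedule="$schedule $((t / 10)).$((t % 10))"
    if [ "$t" -ge "$max" ]; then
        break
    fi
    # Double the timeout for the next retransmission, without passing the cap.
    t=$((t * 2))
    if [ "$t" -gt "$max" ]; then
        t=$max
    fi
done
echo "Timeouts in seconds:$schedule"
```

Starting from 0.7 seconds, the cap is reached on the eighth step. In practice the retrans setting determines how many of these minor timeouts occur before a major timeout is declared.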

Unmounting NFS file systems

After an NFS file system is mounted, unmounting it is simple. You use the umount command with either the local mount point or the remote file system name. For example, here are two ways you could unmount maple:/tmp from the local directory /mnt/maple:

    # umount maple:/tmp
    # umount /mnt/maple

Either form will work. If maple:/tmp is mounted automatically (from a listing in /etc/fstab), the directory will be remounted the next time you boot Red Hat Linux. If it was a temporary mount (or listed as noauto in /etc/fstab), it will not be remounted at boot time.

If you get the message "device is busy" when you try to unmount a file system, it means the unmount fails because the file system is being accessed. Most likely, one of the directories in the NFS file system is the current directory for your shell (or the shell of someone else on your system). The other possibility is that a command is holding a file open in the NFS file system (such as a text editor). Check your Terminal windows and other shells, and cd out of the directory if you are in it, or just close the Terminal windows.

If an NFS file system won't unmount, you can force unmount it (umount -f /mnt/maple) or unmount and clean up later (umount -l /mnt/maple). The -l option is usually the better choice, since a force unmount can disrupt a file modification that is in progress.
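Before forcing an unmount, it can be worth finding out which processes are keeping the file system busy. Here is a minimal sketch, assuming the fuser command (from the psmisc package) is installed. The function name who_uses_mount is hypothetical, and the function is only defined here, not run. Be aware that fuser itself may stall if the NFS server is unreachable.

```shell
# Show which processes are using a mount point before unmounting it.
# Defined here but not called; usage: who_uses_mount /mnt/maple
who_uses_mount() {
    mount_point=$1
    if [ ! -d "$mount_point" ]; then
        echo "usage: who_uses_mount MOUNTPOINT" >&2
        return 2
    fi
    # -m: report processes using the file system mounted at this point
    # -v: show the user, PID, and command for each process
    fuser -vm "$mount_point"
}
```

Once you know which shell or editor is holding the directory, you can close it or cd elsewhere and then run umount normally.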

Other cool things to do with NFS

You can share some directories to make it consistent for a user to work from any of several different Linux computers on your network. Some examples of useful directories to share are:

- /var/spool/mail: By sharing this directory from your mail server, and mounting it on the same directory on other computers on your network, users can access their mail from any of those other computers. This saves users from having to download messages to their current computer or from having to log in to the server just to get mail. There is only one mailbox for each user, no matter from where it is accessed.

- /home: This is a similar concept to sharing mail, except that all users have access to their home directories from any of the NFS clients. Again, you would mount /home on the same mount point on each client computer. When the user logs in, that user has access to all the user's startup files and data files contained in the /home/user directory.

  Tip: If your users rely on a shared /home directory, you should make sure that the NFS server that exports the directory is fairly reliable. If /home isn't available, the user may not have the startup files to log in correctly, or any of the data files needed to get work done. One workaround is to have a minimal set of startup files (.bashrc, .Xdefaults, and so on) available in the user's home directory when the NFS directory is not mounted. Doing so allows the user to log in properly at those times.

- /project: Although you don't have to use this name, a common practice among users on a project is to share a directory structure containing files that people on the project need to share. This way everyone can work on original files and keep copies of the latest versions in one place.

- /var/log: An administrator can keep track of log files from several different computers by mounting each computer's /var/log directory on the administrator's computer. (Each server may need to export the directory to allow root to be mapped between the computers for this to work.) If there are problems with a computer, the administrator can then easily view the shared log files live.

If you are working exclusively with Red Hat Linux and other UNIX systems, NFS is probably your best choice for sharing file systems. If your network consists primarily of MS Windows computers or a combination of systems, you may want to look into using Samba for file sharing.